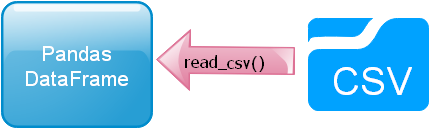

read_csv()

Read and display data from CSV (comma separated value ) file, student.csv file.

Read and display data from CSV (comma separated value ) file, student.csv file.

read_csv(): Create Pandas DataFrame using data from CSV file #B03

Download sample CSV file ( student )

import pandas as pd

df=pd.read_csv('C:\\data\\student.csv') # use your system path

print(df.head()) id name class mark gender

0 1 John Deo Four 75 female

1 2 Max Ruin Three 85 male

2 3 Arnold Three 55 male

3 4 Krish Star Four 60 female

4 5 John Mike Four 60 femaleindex_col

By default the DataFrame will add one index column, if we don't want it to add and use one of the column as index column then we can add like this.df=pd.read_csv('C:\\data\\student.csv',index_col='id')header

The first line in our example csv file is the column headers, this is same as header=0. The first row or 0th row will be treated as column headers.If we want to treat the first row as data and not as header then here is the code.

df=pd.read_csv('C:\\data\\student.csv',header=None) 0 1 2 3 4

0 id name class mark gender

1 1 John Deo Four 75 female

2 2 Max Ruin Three 85 male

3 3 Arnold Three 55 male

4 4 Krish Star Four 60 femaleThe file have one header row at top but we want to read only data ( not the headers ) or skip the header. Note that by using header=None we will include header as first row data. To remove the header will use skiprows=1

df=pd.read_csv('C:\\data\\student.csv',header=None,skiprows=1) 0 1 2 3 4

0 1 John Deo Four 75 female

1 2 Max Ruin Three 85 male

2 3 Arnold Three 55 male

3 4 Krish Star Four 60 female

4 5 John Mike Four 60 femaledf=pd.read_csv('C:\\data\\student.csv',header=None,skiprows=1,usecols=[3,4]) 3 4

0 75 female

1 85 male

2 55 male

3 60 female

4 60 femalenames

We can use our own headers while creating the DataFrame. Here is a list of headers and same is used by using names option.my_names=['my_id','my_name','my_class','my_mark','my_gender']

df=pd.read_csv('student.csv',names=my_names) my_id my_name my_class my_mark my_gender

0 id name class mark gender

1 1 John Deo Four 75 female

2 2 Max Ruin Three 85 male

3 3 Arnold Three 55 male

4 4 Krish Star Four 60 femaledf=pd.read_csv('student.csv',names=my_names,header=0)na_values

Using the option na_values we can mark data as NaN and indicate them by using isnull()blank_values = ["n/a", "na", "--"]

my_data=pd.read_csv("D:\\test-na_values.csv",na_values=blank_values)

my_data['status']=my_data['name'].isnull() # new column added

print(my_data)skiprows

Skip the first 6 rows and then read.df=pd.read_csv(path,skiprows=6) 6 Alex John Four 55 male

0 7 My John Rob Fifth 78 male

1 8 Asruid Five 85 male

2 9 Tes Qry Six 78 male

3 10 Big John Four 55 female

4 11 Ronald Six 89 femaleconverters

By using converters option we can parse our input data to convert it to a desired dtype using a conversion function.Here by using one function to_float() we have converted the mark column data to float value while reading the csv file.

import pandas as pd

def to_float(x):

return float(x.strip('%'))/100

#return int(float(x.strip('%'))) # as integer

df=pd.read_csv('C:\\data\\student.csv',converters={'mark':to_float})

print(df.head()) id name class mark gender

0 1 John Deo Four 0.75 female

1 2 Max Ruin Three 0.85 male

2 3 Arnold Three 0.55 male

3 4 Krish Star Four 0.60 female

4 5 John Mike Four 0.60 femalechunksize

For a large number of rows we can break in chunks while reading the file, here as an example the above csv file is opened with a chunksize=2. We can read part ( or chunk ) of the total rows by this.import pandas as pd

df=pd.read_csv('C:\\data\\student.csv',chunksize=3)

for chunk in df:

print(chunk) id name class mark gender

0 1 John Deo Four 75 female

1 2 Max Ruin Three 85 male

2 3 Arnold Three 55 male

id name class mark gender

3 4 Krish Star Four 60 female

4 5 John Mike Four 60 female

5 6 Alex John Four 55 maleAll options

pd.read_csv(filepath_or_buffer,

sep=’, ‘, delimiter=None, header=’infer’,

names=None, index_col=None, usecols=None,

squeeze=False, prefix=None, mangle_dupe_cols=True,

dtype=None, engine=None, converters=None,

true_values=None, false_values=None,

skipinitialspace=False, skiprows=None,

nrows=None, na_values=None, keep_default_na=True,

na_filter=True, verbose=False,

skip_blank_lines=True, parse_dates=False,

infer_datetime_format=False, keep_date_col=False,

date_parser=None, dayfirst=False, iterator=False,

chunksize=None, compression=’infer’, thousands=None,

decimal=b’.’, lineterminator=None, quotechar='”‘,

quoting=0, escapechar=None, comment=None, encoding=None,

dialect=None, tupleize_cols=None, error_bad_lines=True,

warn_bad_lines=True, skipfooter=0, doublequote=True,

delim_whitespace=False, low_memory=True,

memory_map=False, float_precision=None)From MySQL to CSV

This is a common requirement as we read data from MySQL database and then save the data in CSV file.We will further extend this script to read from CSV file and store data in MySQL database.

We are going to use sqlalchemy for our MySQL database connection.

We are first connecting to MySQL database by using our connection userid, password and database name ( db_name ). Then using read_sql() to run the query to get data from student table.

We are writing the data to CSV file by using to_csv().

In the 2nd part of the script we are reading the data from CSV file by using read_csv() and creating a DataFrame. Then we are creating the table by using to_sql(). Here is the complete code.

import pandas as pd

from sqlalchemy import create_engine

engine = create_engine("mysql+mysqldb://userid:password@localhost/db_name")

sql="SELECT * FROM student "

my_data = pd.read_sql(sql,engine )

my_data.to_csv('D:\my_file.csv',index=False)

### End of storing data to CSV file ###

### Reading data from CSV file and creating table in MySQL ####

student3=pd.read_csv("D:\my_file.csv")

my_data = pd.DataFrame(data=student3)

print(my_data)

### Creating new table student3 or appending existing table

my_data.to_sql(con=engine,name='student3',if_exists='append')Questions

- What is the purpose of the

read_csv()function in Pandas? - How do you read a CSV file using the

read_csv()function in Pandas? - What are the common parameters used in the

read_csv()function? - How can you specify the file path or URL of the CSV file to be read?

- How does the

read_csv()function handle missing or null values in a CSV file? - Can you specify a different delimiter or separator character for non-CSV files using

read_csv()? - What is the role of the

headerparameter inread_csv()? How can you handle cases where the CSV file has no header? - How can you limit the number of rows or columns to be read from a CSV file using

read_csv()? - How does the

read_csv()function handle different data types in a CSV file? - Can you provide an example of reading a CSV file with specific columns using the

usecolsparameter inread_csv()? - How can you skip rows or skip initial lines in a CSV file while reading it with

read_csv()? - Is it possible to customize the way

read_csv()handles date and time data? - Can you read multiple CSV files at once using

read_csv()? If yes, how? - What is the significance of the

index_colparameter inread_csv()? - How can you handle and convert categorical data while reading a CSV file with

read_csv()?

Data input and output from Pandas DataFrame

Pandas read_excel() to_csv() to_excel() to_string()

Sample student DataFrame

Subhendu Mohapatra

Author

🎥 Join me live on YouTubePassionate about coding and teaching, I publish practical tutorials on PHP, Python, JavaScript, SQL, and web development. My goal is to make learning simple, engaging, and project‑oriented with real examples and source code.

Subscribe to our YouTube Channel here

This article is written by plus2net.com team.

https://www.plus2net.com

Python Video Tutorials

Python Video Tutorials